TL;DR

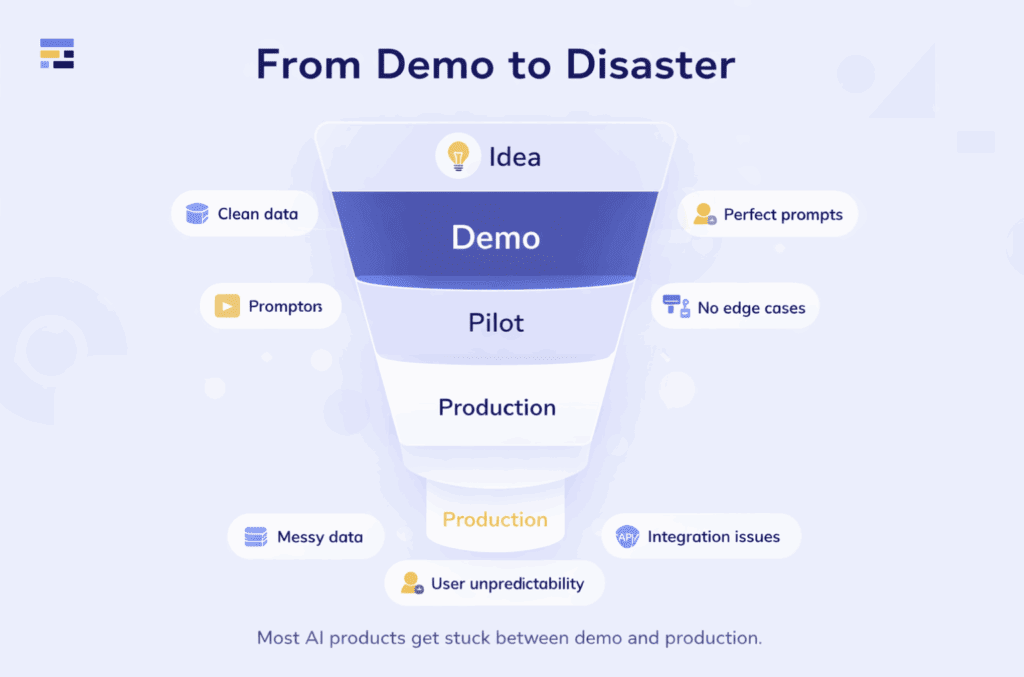

- Most AI apps fail post-demo because production demands reliability, integrations, monitoring, security, and scalability.

- Over 80% of AI projects fail to deliver measurable value; up to 95% of genAI pilots don’t impact business outcomes.

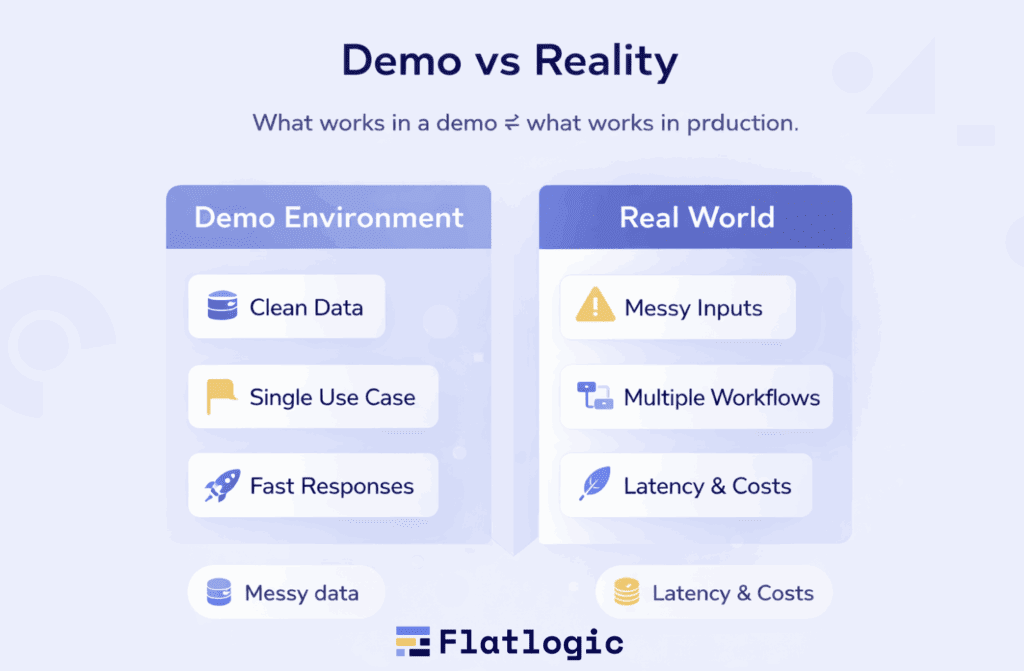

- Demos use clean data and controlled flows; real users bring messy inputs, edge cases, latency, cost, and uptime constraints.

- The 7 common failure causes: weak problem–solution fit, bad data, integration complexity, no prod plan, hype, low adoption, no iteration.

- Successful teams treat AI as a system: start from business outcomes, design integrations early, and run feedback loops for continuous improvement.

Fact Box

- The article states that over 80% of AI projects fail to deliver measurable value, with many stuck at proof-of-concept.

- It claims up to 95% of generative AI pilots fail to impact business outcomes due to poor integration, strategy, and execution.

- It lists 7 reasons AI app builders fail after the first demo, including data issues, integration, adoption, and lack of iteration.

- It argues demos optimize for impressiveness, while production success depends on reliability under messy data, real users, and load.

Most AI apps don’t fail because the demo was bad; they fail because the demo was the only part that worked.

If you’re building an AI-powered product, you’ve probably asked yourself:

- Why does my AI prototype look impressive, but fall apart in production?

- Why do competitors launch flashy demos but never ship real value?

- What does it actually take to turn an AI idea into a scalable product?

- Are AI app builders just hype tools, or can they deliver real ROI?

As Andrew Ng famously said, “AI is the new electricity.” But like electricity, it only creates value when it’s wired into real systems, not just demonstrated in isolation.

The problem is massive and well-documented. Studies show that over 80% of AI projects fail to deliver measurable value, with many never progressing beyond proof-of-concept. Even more striking, recent research suggests that up to 95% of generative AI pilots fail to impact business outcomes, primarily due to poor integration, lack of strategy, and execution gaps. For startups and SMBs, the situation is even harsher: limited resources, weaker data infrastructure, and unrealistic expectations amplify these failure rates.

By reading this article, you’ll understand why most AI app builders fail after the first demo, what separates scalable AI products from “demo-ware,” and how to build AI applications that actually survive in production, especially if you’re an SMB or startup.

Why AI App Builders Look Great in Demos (and Nowhere Else)

At first glance, most AI app builders feel almost magical. In a controlled demo, they respond instantly, generate accurate outputs, and appear ready to replace entire workflows. It creates a powerful impression: if it works this well now, scaling it should be easy. But this is exactly where many teams misjudge reality, because what works in a demo environment is often the least representative version of the product.

The Nature of a Demo: Designed to Impress

A demo is not a real product environment; it is a carefully staged experience. Every element is optimized to highlight strengths and hide weaknesses. Inputs are predictable, data is clean, and the flow is linear. There are no unexpected user behaviors, no broken integrations, and no performance bottlenecks.

This is intentional. A demo exists to answer a single question: “Is this idea possible?” And most AI app builders answer that question very well.

The problem is that possibility is only the starting point. It says nothing about whether the solution can survive real-world complexity.

What Changes in the Real World

The moment an AI app leaves the demo environment, it encounters conditions that are fundamentally different. Instead of clean inputs, it faces messy, inconsistent data. Instead of predictable interactions, it deals with users who phrase things in unexpected ways or use the product incorrectly. Instead of running in isolation, it must operate within an ecosystem of existing tools, APIs, and constraints.

This shift exposes weaknesses that were invisible during the demo.

A system that seemed highly accurate may suddenly produce inconsistent or irrelevant outputs. A workflow that felt seamless may break when it depends on multiple integrations. Latency, cost, and reliability, which barely register in a demo, become critical factors.

In short, the AI hasn’t changed. The environment has.

The Hidden Gap Between Prototype and Product

The core issue is not that demos are misleading; it’s that they are incomplete. They validate the intelligence of the system, but not its durability.

In a demo, AI is treated as a feature.

In production, it becomes part of a system.

And systems introduce requirements that demos ignore: stability, scalability, monitoring, error handling, and security. These are not small additions; they are the majority of the work.

This is why many teams find themselves stuck after building a successful prototype. They’ve proven that the AI can generate value under ideal conditions, but they haven’t built the infrastructure needed to deliver that value consistently.

This state is often referred to as “pilot purgatory,” where a product repeatedly demonstrates promise but never fully launches.

Why the Illusion Persists

For startups and SMBs, the impact of this gap is amplified. A strong demo can generate excitement, attract stakeholders, and even secure funding. It creates momentum, and sometimes pressure, to move forward quickly.

But when the product is exposed to real usage and begins to fail, that momentum reverses. Users lose trust. Teams become cautious. What once looked like a breakthrough starts to feel unreliable.

The illusion persists because demos optimize for short-term validation, while real products require long-term consistency. And consistency is much harder to achieve.

From Impressive to Reliable

The difference between a demo and a successful AI product comes down to one idea: reliability over impressiveness. A demo proves that something can work. A product proves that it works every day, under pressure, at scale.

Bridging this gap requires shifting focus away from the visible parts of AI (prompts, outputs, UI) toward the invisible foundations: data quality, system design, integration, and continuous improvement.

These are not the elements that make demos exciting. But they are the ones that determine whether an AI app survives beyond the first impression.

And that is why so many AI app builders look exceptional in a demo, yet struggle to exist anywhere else.

The 7 Reasons Most AI App Builders Fail After the First Demo

Once an AI app successfully passes the demo stage, it enters a much harsher reality, one where technical feasibility is no longer enough. What looked like a promising product quickly collides with business constraints, messy data, and operational complexity. This is the point where most AI app builders fail, not because the idea was wrong, but because the system behind it was never built to last.

Understanding why this happens is critical, especially for SMBs and startups, where every failed initiative carries real cost.

1. No Real Problem-Solution Fit

Many AI products begin with the technology, not the problem. Teams get excited about what AI can do and start building features in search of a use case. The result is often a product that feels impressive but lacks urgency or necessity.

In practice, users don’t adopt tools because they are innovative; they adopt them because they solve something painful. If the AI doesn’t clearly reduce time, cut costs, or measurably improve outcomes, it quickly becomes optional. And optional tools rarely survive.

The demo hides this issue because it focuses on capability, not value. But once real users are involved, the absence of a strong problem-solution fit becomes obvious.

2. The Data Illusion

AI demos are typically powered by clean, structured, and carefully selected datasets. In production, that illusion disappears.

Real-world data is fragmented, inconsistent, and often incomplete. Fields are missing, formats vary, and historical data may not even exist, especially in early-stage startups. Without reliable data, even the most advanced AI models produce unreliable results.

This creates a fundamental disconnect: the AI worked in the demo because the data was ideal. It fails in production because the data is not.
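One way to narrow that disconnect is to validate and normalize every record before it reaches the model. The sketch below is a minimal illustration, not a prescribed pipeline: the `Ticket` shape, its field names, and the list of accepted date formats are all hypothetical assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import date, datetime
from typing import List, Optional, Tuple


# Hypothetical record shape; the field names are illustrative only.
@dataclass
class Ticket:
    customer_id: str
    created: date
    text: str


# Assumed set of date formats seen in the source systems.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%m-%d-%Y")


def parse_date(raw: str) -> Optional[date]:
    """Try each known format; return None when nothing matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date()
        except ValueError:
            continue
    return None


def clean(raw: dict) -> Tuple[Optional[Ticket], List[str]]:
    """Return (ticket, problems). A ticket is returned only when problems is empty."""
    problems: List[str] = []

    customer_id = str(raw.get("customer_id") or "").strip()
    if not customer_id:
        problems.append("missing customer_id")

    created = parse_date(str(raw.get("created") or ""))
    if created is None:
        problems.append("unparseable created date")

    # Collapse runs of whitespace so the model sees consistent text.
    text = " ".join(str(raw.get("text") or "").split())
    if not text:
        problems.append("empty text")

    if problems:
        return None, problems
    return Ticket(customer_id, created, text), problems
```

Rejected records come back with a list of reasons rather than being silently dropped, so the team can see how much real data falls outside what the demo assumed.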

For many teams, this is the first major realization that AI success depends less on the model and more on the data ecosystem behind it.

3. Integration Is the Real Challenge

In demos, AI systems operate in isolation. In reality, they must fit into existing workflows and connect with multiple tools: CRMs, databases, internal systems, and third-party APIs.

This is where complexity compounds.

Every integration introduces dependencies, potential failures, and performance constraints. Authentication, permissions, data synchronization, and API reliability all become critical. None of these challenges is visible in a demo, but they define success in production.
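To make the API reliability point concrete, here is a minimal sketch of one common defensive pattern: retrying a transient failure with exponential backoff, then degrading to a fallback instead of crashing. `IntegrationError`, `call_with_retry`, and the default values are hypothetical names and numbers for illustration; a real client library would raise its own exception types.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class IntegrationError(Exception):
    """Raised by a (hypothetical) client when a downstream call fails transiently."""


def call_with_retry(
    fn: Callable[[], T],
    fallback: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying transient failures with exponential backoff and jitter.

    After the last failed attempt, return fallback() instead of raising.
    """
    for attempt in range(attempts):
        try:
            return fn()
        except IntegrationError:
            if attempt == attempts - 1:
                break
            # Delay doubles each attempt (base, 2*base, 4*base, ...) plus up to 10% jitter.
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay * 0.1))
    return fallback()
```

The `sleep` parameter is injectable so the behavior can be tested without waiting. The fallback might return a cached value or a "try again later" message, which keeps one flaky dependency from taking down the whole workflow.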

Many AI app builders underestimate this step, focusing heavily on model performance while treating integration as a secondary task. In practice, it is often the hardest part of the entire system.

4. No Clear Path to Production

A successful demo answers the question: “Can this work?”

But production requires answering a different one: “Can this scale and sustain?”

These are not the same problem.

Teams often invest heavily in building a prototype but fail to plan what comes next. Infrastructure, deployment pipelines, monitoring, and maintenance are either overlooked or underestimated. As usage grows, systems become unstable, costs increase, and technical debt accumulates.

Without a clear production strategy, the project stalls. The prototype exists, but it never evolves into a reliable product.

5. Misaligned Expectations About AI

AI is often perceived as a near-magical solution, something that can automate complex processes instantly and perfectly. This expectation is reinforced by polished demos, which show only the best-case scenarios.

In reality, AI systems are probabilistic and imperfect. They require iteration, supervision, and continuous refinement. Outputs may vary, errors will occur, and edge cases are inevitable.
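In code, accepting that outputs vary usually means never trusting a model reply blindly. The following is a rough sketch under stated assumptions: the model is asked to reply with JSON containing a `label` and a `confidence`, and both the label set and the 0.7 threshold are hypothetical values picked for the example.

```python
import json
from typing import Any, Dict

ALLOWED_LABELS = {"billing", "technical", "other"}  # hypothetical label set


def parse_model_reply(reply: str, min_confidence: float = 0.7) -> Dict[str, Any]:
    """Validate a reply expected to look like {"label": ..., "confidence": ...}.

    Anything malformed, unexpected, or low-confidence is routed to human
    review instead of being trusted.
    """
    review = {"label": None, "needs_review": True}

    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return review  # the model answered in prose or broken JSON
    if not isinstance(data, dict):
        return review

    label = data.get("label")
    confidence = data.get("confidence")

    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        return review
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return review

    if confidence < min_confidence:
        return {"label": label, "needs_review": True}
    return {"label": label, "needs_review": False}
```

The design choice here is that edge cases become a review queue rather than a silent wrong answer, which is one practical form of the supervision described above.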

When expectations are not aligned with this reality, disappointment follows. Stakeholders expect immediate ROI, while teams struggle with ongoing improvements. For startups and SMBs with limited runway, this gap can quickly become unsustainable.

6. Lack of Adoption, Even When It Works

Even when an AI solution is technically sound, it can still fail if people don’t use it.

Adoption is often treated as an afterthought, but it is one of the most critical success factors. Users may not trust the system, may not understand how to use it, or may simply prefer existing workflows. Without proper onboarding, training, and workflow integration, even a high-performing AI tool can remain unused.

This highlights a key reality: AI success is not just a technical challenge; it is an organizational one.

7. Static Systems in a Dynamic Environment

Many AI applications are built as if they are static products, launched once and expected to perform consistently over time. But AI systems degrade if they are not actively maintained.

Data changes, user behavior evolves, and business needs shift. Without feedback loops, monitoring, and continuous improvement, performance declines. What once worked well becomes outdated or irrelevant.
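A feedback loop can start very small. The sketch below assumes the product captures a simple accepted/rejected signal from users for each AI output; `QualityMonitor`, the baseline value, and the tolerance are hypothetical and would need tuning for a real product.

```python
from collections import deque
from typing import Optional


class QualityMonitor:
    """Tracks a rolling acceptance rate and flags drops below a baseline."""

    def __init__(self, baseline: float, window: int = 100, tolerance: float = 0.10):
        self.baseline = baseline      # acceptance rate measured at launch (assumed known)
        self.tolerance = tolerance    # allowed absolute drop before flagging
        self.outcomes = deque(maxlen=window)  # keeps only the most recent outcomes

    def record(self, accepted: bool) -> None:
        """Store whether the user accepted the latest AI output."""
        self.outcomes.append(accepted)

    def rate(self) -> Optional[float]:
        """Acceptance rate over the current window, or None with no data."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self) -> bool:
        """True when a full window sits below baseline minus tolerance."""
        # Only judge once the window is full, to avoid alerting on a handful of samples.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rate() < self.baseline - self.tolerance
```

In use, `record()` would be called whenever a user accepts or rejects an output, and `degraded()` would be checked on a schedule to trigger an alert or a review of recent inputs.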

Successful AI products are not “set and forget.” They are living systems that learn, adapt, and improve over time.

The Pattern Behind the Failures

Individually, these issues may seem manageable. But together, they reveal a consistent pattern: most AI app builders are optimized for creating impressive first versions, not sustainable products.

They prove that the idea works, but not that it works reliably, repeatedly, and at scale. And that is the real reason so many AI applications fail after the first demo.

Why This Problem Hits SMBs and Startups Hardest

For SMBs and startups, the gap between demo and production isn’t just a technical challenge; it’s a business risk.

Unlike large enterprises, smaller teams don’t have the luxury of absorbing failed experiments. They operate with limited budgets, lean teams, and tight timelines. Every product decision must move the business forward, and every misstep carries a higher cost.

This makes AI especially tricky. A strong demo can create early excitement, but turning it into a reliable product requires additional investment in data, infrastructure, and integration, and SMBs often lack those resources. When expectations are high and results take longer than anticipated, momentum quickly fades.

There’s also less room for iteration. While large companies can afford multiple AI pilots, startups usually get one real attempt. If that attempt stalls after the demo stage, it can impact not just the product but overall confidence in the direction of the business.

In this environment, success with AI isn’t about building something impressive; it’s about building something that works consistently, delivers value quickly, and fits within real constraints.

The Real Gap: Demo vs. Deployment

The difference between a demo and a real product is where most AI initiatives break down.

A demo proves that something can work under ideal conditions. Deployment proves that it does work: consistently, at scale, and in unpredictable environments. This shift introduces challenges that are invisible during the demo stage but unavoidable in production.

In a demo, everything is controlled: data is clean, workflows are simple, and performance doesn’t matter much. In deployment, the system must handle messy inputs, edge cases, real users, and constant load, all while staying reliable and cost-efficient.

This is the real gap: moving from a controlled showcase to an operational system.

| Demo Stage | Deployment Reality |
| --- | --- |
| Clean, structured data | Messy, incomplete, inconsistent data |
| Predefined use cases | Multiple, evolving workflows |
| Controlled environment | Unpredictable real-world conditions |
| Limited users or test inputs | High concurrency and diverse usage |
| No strict performance requirements | Latency, uptime, and cost matter |
| Isolated system | Deep integrations with existing tools |
| Minimal security concerns | Compliance, privacy, and risk management |

Bridging this gap requires more than improving the AI itself. It demands building the surrounding infrastructure (data pipelines, integrations, monitoring, and safeguards) that ensures the AI delivers value every time it’s used.

Most AI app builders fail not because the demo was wrong, but because they never fully cross this gap.

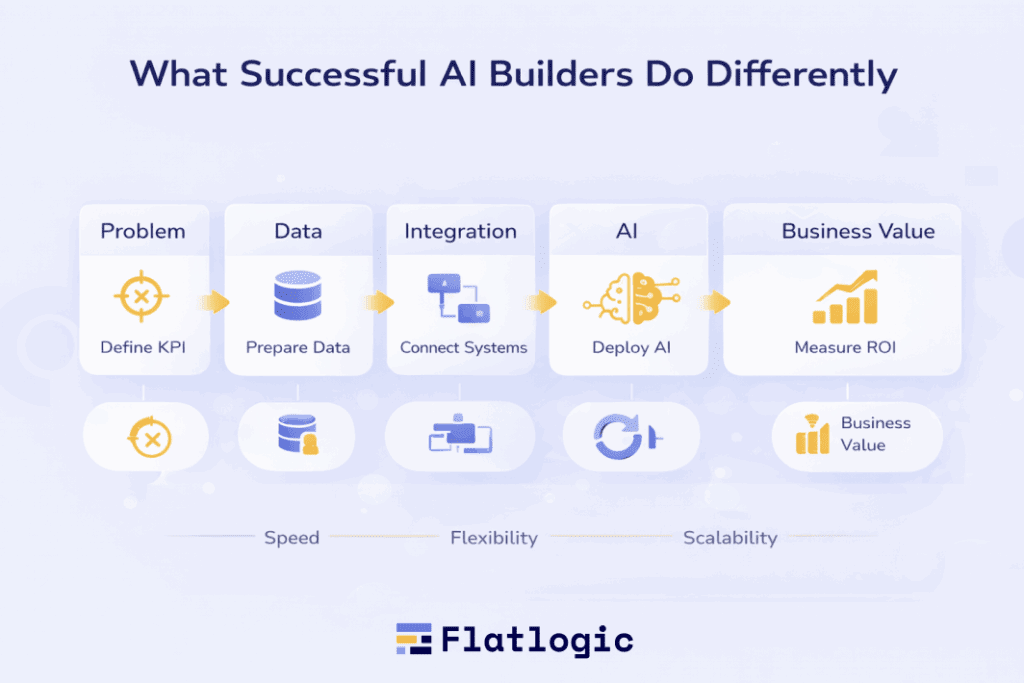

What Successful AI Builders Do Differently

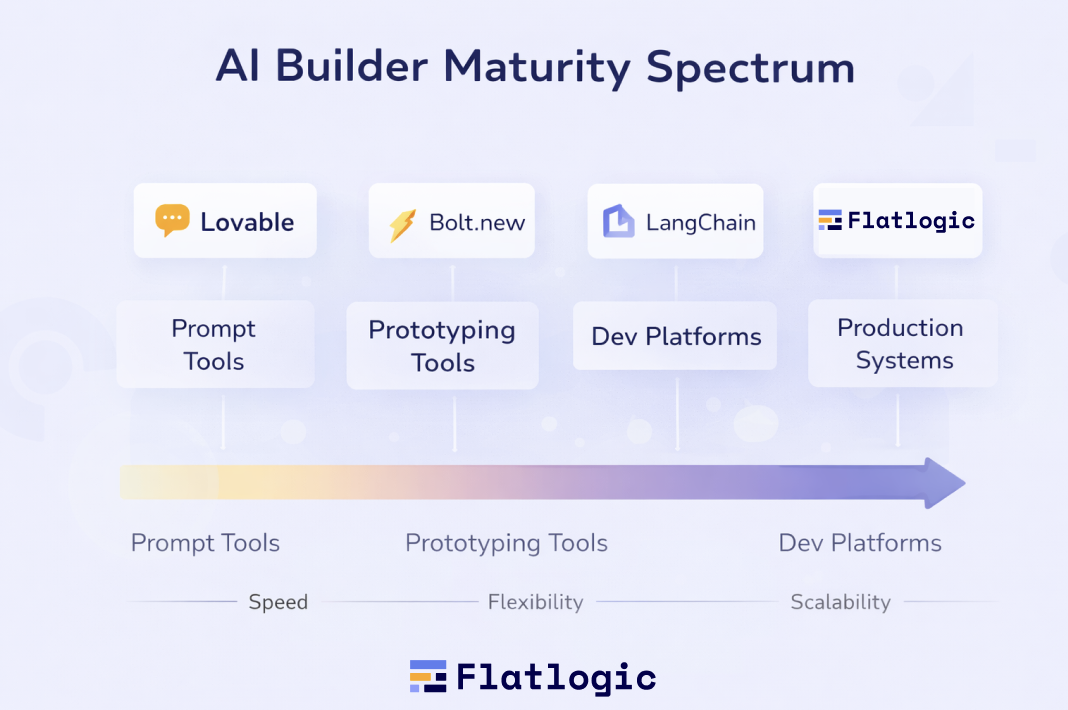

The difference between AI products that stall after a demo and those that succeed in production isn’t the model; it’s the approach. Successful AI builders don’t treat AI as a standalone feature. They treat it as part of a larger system designed to deliver consistent, measurable value.

Instead of optimizing for speed or impressiveness, they optimize for durability. They think beyond the first use case, beyond the first users, and beyond the first success metric. This shift in mindset changes how they design, build, and scale AI applications.

They Build Systems, Not Just Features

The most effective AI builders start with the full picture: backend, database, business logic, and user workflows, not just prompts and outputs.

Platforms like Flatlogic lead this approach by combining AI with production-ready architecture. Instead of generating isolated features, they help teams build complete applications that are structured, scalable, and ready to integrate into real business environments. This reduces one of the biggest risks in AI development: the gap between prototype and production.

They Focus on Business Outcomes First

Successful teams don’t ask, “What can we build with AI?” They ask, “What measurable outcome do we need?”

This means defining success in terms of:

- Time saved

- Costs reduced

- Revenue generated

AI becomes a tool to achieve these outcomes, not the goal itself.

They Prioritize Integration from Day One

Rather than building standalone AI tools, successful builders design solutions that fit into existing ecosystems. They consider how the AI will interact with CRMs, internal systems, APIs, and user workflows from the very beginning. This avoids one of the most common failure points: trying to bolt integration onto a system that was never designed for it.

They Treat AI as an Evolving System

High-performing teams understand that AI is not static. They build feedback loops, monitor performance, and continuously improve outputs based on real usage. This allows the system to adapt over time instead of degrading, a key factor in long-term success.

Examples of AI Builders and Tools

The following table highlights how different tools approach AI app development, and why some are better suited for moving beyond the demo stage.

| Tool | Approach | Strength | Limitation |
| --- | --- | --- | --- |
| Flatlogic | Full-stack AI app generation (frontend + backend + DB) | Production-ready architecture, faster path to real apps | Requires clear business logic upfront |
| Lovable | Prompt-based full-stack app generation | Extremely fast idea-to-app workflow | Limited control and scalability for complex systems |
| Replit | AI-assisted development environment | Flexible, supports real coding and deployment | Requires technical knowledge for production readiness |
| Bolt.new | Instant app generation from prompts | Rapid prototyping and iteration | Early-stage, limited robustness for large-scale apps |
| Bubble | Visual no-code builder with plugins | Fast prototyping, flexible UI | Scaling and backend complexity can be challenging |
| LangChain | Framework for building LLM-powered apps | Flexible and powerful for custom logic | Requires engineering effort and infrastructure |

What unites successful AI builders is not the specific tool they use, but how they use it. They:

- Start with real problems

- Design for real environments

- Build for long-term use, not short-term validation

Because in the end, success in AI isn’t about launching something impressive. It’s about building something that keeps working long after the demo ends.

Summing Up

AI app builders haven’t failed, but the way most teams use them often does.

The core issue isn’t the technology. Modern AI tools are more powerful and accessible than ever. The real problem is the gap between what is demonstrated and what is delivered. Demos prove potential, but products require reliability, integration, and continuous improvement, and that’s where most efforts fall short.

For SMBs and startups, this gap is especially critical. With limited resources and little room for error, success with AI depends on making the right decisions early: choosing meaningful use cases, building on solid data foundations, and prioritizing systems over standalone features.

The teams that succeed are not the ones with the most advanced models or the flashiest demos. They are the ones who treat AI as part of a larger product, something that must work consistently in real conditions, not just impress in controlled environments.

As the AI landscape matures, the advantage will shift away from those who can build quickly to those who can build sustainably. The winners won’t be defined by what they can show in a demo, but by what continues to deliver value long after launch.

Because in the end, the market doesn’t reward potential. It rewards products that work.